European Parliament buys Claude, a system that can’t accurately tell users who the first president of the European Commission is.

3 April 2025 — In October 2024, the European Parliament selected an AI model to operate a new historical archive where citizens can ask questions and receive answers.

Anthropic, the AI company providing the service to the European Parliament, markets itself as a more trustworthy alternative to Meta, Google, and OpenAI. The European Parliament’s decision to use Anthropic’s AI model is therefore a useful endorsement, which Anthropic celebrated.

But an Enforce examination reveals that the Parliament uses unreliable AI technology producing false outputs that the Parliament does not control.

Blind faith in Anthropic’s AI Constitution

Anthropic’s AI model, Claude, relies on Constitutional AI, which is a set of principles chosen by Anthropic. These principles use “‘helpful, honest, and harmless’ as criteria because they are simple and memorable, and seem to capture the majority of what we want from an aligned AI.” No independent evaluation has so far established that these “simple and memorable” criteria are meaningful or that Anthropic’s product matches its claims. The Archive Unit of the Parliament nevertheless treats Anthropic’s Constitutional AI claim as gospel truth.

Members of the European Parliament were at the forefront of proposing rules in the AI Act to address the harms from generative AI systems like Claude. Instead of following the rules in the AI Act, the Parliament’s Archive Unit gambles by relying on Anthropic’s claims, as the documents show:

“The Large Language Model (LLM) implemented is based on an AI Constitutional approach.[1] This ensures that answers provided respects the values provided by the Declaration of Human Rights from the United Nations.”

“The training set [h]as been evaluated according to its compliance to the AI Constitutional approach to mitigate this risk …. [T]he LLM is trained according to an AI Constitutional approach to prevent this type of processing [of personal data]. The Claude models are specifically trained to respect privacy.”

It is unclear why the Parliament assesses “compliance to the AI Constitutional approach” rather than compliance with the GDPR and the AI Act. Anthropic, by its own admission, uses web-scraped data for AI training. This may be unlawful, as the data could include special categories of personal data.[2]

Bullshit generators for archive access

Unlawful processing of personal data is not the only issue with LLMs. They memorise their training data and regurgitate information. They are probabilistic systems that generate “bullshit”. LLMs should not be used where accurate information is required.

Yet the Parliament did not assess non-LLM alternatives. It only “tested” two LLMs: Claude from Anthropic, and Titan from Amazon.[3] The Parliament is locked into Amazon’s cloud ecosystem and relies on Amazon Bedrock, Amazon’s marketplace for AI models.

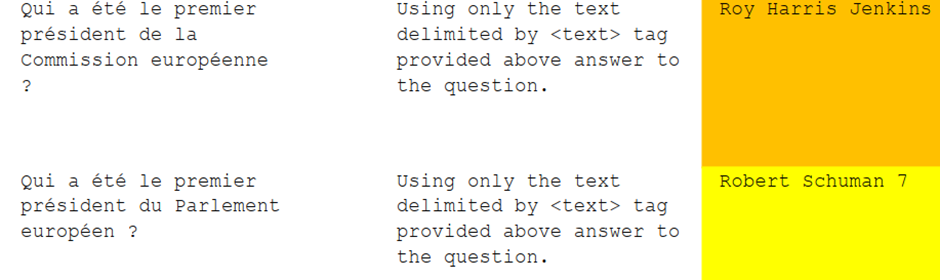

Anthropic claims that the project achieves “high accuracy”. However, the documents from the Parliament reveal otherwise. The Parliament used a list of thirty “test” questions in French. In the Parliament’s “test”,[4] Claude gets the first President of the European Commission wrong: it answers “Robert Schuman 7”. “Robert Schuman 7” is likely the address of a café in Brussels, which Claude may have memorised. Despite the problems with LLMs, the Parliament chose Claude.

The Parliament’s (lack of) assessment

The Parliament should have performed a range of assessments before deploying ‘Ask the EP Archives’, which is hosted on Amazon AWS and is available to anyone through the Parliament’s website. Surprisingly, the Parliament did not assess the tool for use by the general public.

“This system is designed for a business-to-business context more than a business-to-consumer. Users expected are young researchers, researchers, archivists or historians”

Why was “a system designed for a business-to-business context” made open to the public without further assessment? The general public is likely to take the information on the Parliament’s website as authoritative. Yet Claude fails on simple questions.

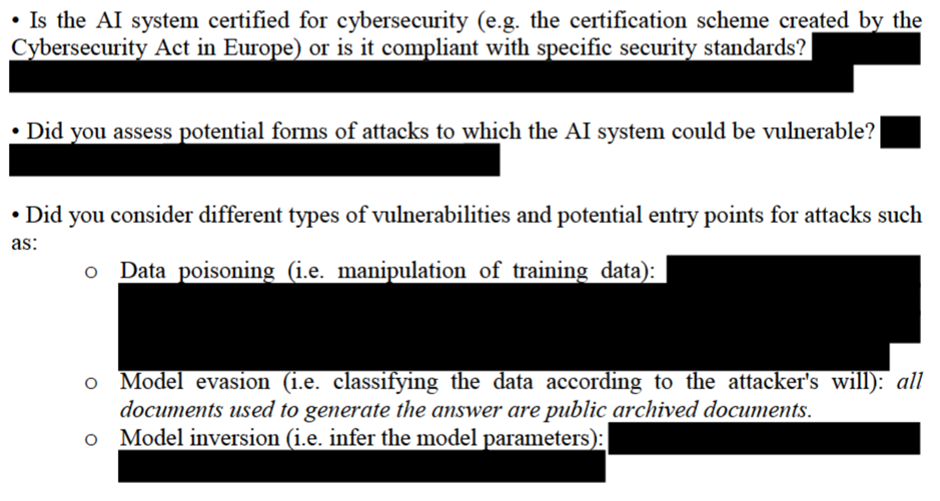

Furthermore, the Parliament has not performed a risk assessment, a data protection impact assessment, or a proper environmental impact assessment.[5] As for cyber security, the Parliament depends on security by redaction.

Who is in control?

While the Parliament has failed to assess the tool before deployment, Anthropic lists the Parliament among its customers. But there were no tenders or contracts. Enforce was informed that

“Parliament’s use of the services specified in your application for the Historical Archives is done through the European Commission acting as an intermediary for cloud brokering services… In that sense, there is no direct contract for Parliament’s use of Amazon Bedrock, and Claude as provided by Anthropic.”

The European Commission has a contract with Amazon AWS. The Parliament relies on the Commission’s contract. But neither the Commission nor the Parliament has a contract with Anthropic for generative AI.

The Parliament’s Head of Archive Unit said in a promotional video for Anthropic: “If we use generative AI, we permanently need to be under [sic] control of the solution that we’ve built.” Yet the Parliament uses Amazon’s infrastructure, Amazon’s marketplace, and AI models from Amazon-funded Anthropic.

The Parliament is not in control. Amazon is.

Ends

Documents received from the European Parliament through an access to documents request:

- Trustworthy AI Assessment List Archibot 3.0

- The Parliament’s assessments of LLMs:

Notes

[1] https://www.anthropic.com/news/claudes-constitution

[2] The EDPB states that ‘the mere fact that personal data is publicly accessible [and available for web scraping] does not imply that “the data subject has manifestly made such data public”.’

[3] The Parliament also claimed to have used “ChaGPT [sic] 3.5” but did not provide documents for it when we asked for test questions and answers.

[4] The responses from Titan were no better. See also comparison between Sonnet 3.0 and 3.5 “tested” by the Parliament.

[5] The environmental assessment is limited to the Parliament’s belief in Amazon AWS using 90% renewable energy.